Have you ever searched for something online and found the answer within seconds? It feels effortless, but behind that speed is a powerful system working non-stop. Search engines constantly explore the internet to gather information, then organize that data so users can receive accurate results right away. In simple terms, search engines crawl websites to discover new content, and this process helps them understand what each page offers.

However, launching a website alone does not guarantee visibility. If your pages are hard to access, search engines might overlook them. Poorly structured pages can also remain unseen. As a result, your content might never appear in search results. This is why it's important to understand how search engines crawl websites. Anyone looking to improve their online presence will benefit from this knowledge.

In this guide, we will break down the entire process step by step. You will learn how search engines find pages, store data, and rank content. Moreover, you will learn practical strategies to make your website easier to find. These tips will also help your site become more competitive in search results.

How do search engines work?

Search engines act like smart assistants that organize the web. Rather than selecting pages at random, they follow a structured process. This ensures they deliver the most relevant and accurate results.

First, search engines crawl websites to locate new and updated pages. After that, they store the collected information in a large database. Finally, they analyze and rank pages based on relevance, usefulness, and quality.

In addition, search engines assess factors such as page loading speed and mobile usability. They also consider how users interact with a website. SEO is therefore about more than adding keywords. It is about providing a complete and optimized experience for users.

What is Crawling in SEO? Now That You Know, Optimize for It

Crawling is the foundation of SEO. It is the step where automated bots visit your site and explore its content.

When search engines crawl websites, they follow links from one page to another. This means your internal linking structure plays a big role. If your pages are clearly linked, bots can navigate your site without confusion.

To improve crawling performance:

- Keep your website structure organized

- Fix broken or outdated links

- Ensure navigation is simple and clear

By doing this, you make it easier for bots to scan and understand your content.

How web crawling works for search engines

Search engines use specialized programs known as crawlers or spiders. These programs start with known URLs. They then follow links to find extra pages.

As they move across different websites, they gather information from each page. This process runs without stopping, allowing search engines to stay updated.
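The link-following loop described above can be sketched as a breadth-first traversal. This is a minimal sketch, not how any real search engine is implemented: the `SIMULATED_WEB` dictionary is a hypothetical stand-in for fetching pages over HTTP, and the URLs and the `crawl` function are illustrative only.

```python
from collections import deque

# Hypothetical stand-in for the web: each URL maps to the links found on that page.
# A real crawler would fetch each page over HTTP and parse its HTML instead.
SIMULATED_WEB = {
    "https://example.com/": ["https://example.com/blog", "https://example.com/about"],
    "https://example.com/blog": ["https://example.com/blog/post-1", "https://example.com/"],
    "https://example.com/blog/post-1": ["https://example.org/"],
    "https://example.com/about": [],
    "https://example.org/": [],
}


def crawl(seed_urls, max_pages=100):
    """Visit pages breadth-first, starting from known (seed) URLs."""
    frontier = deque(seed_urls)  # URLs waiting to be visited
    visited = []                 # URLs in the order they were crawled
    seen = set(seed_urls)        # prevents queueing the same URL twice

    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        # "Fetch" the page and queue every link we have not seen yet.
        for link in SIMULATED_WEB.get(url, []):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return visited


print(crawl(["https://example.com/"]))
```

The `seen` set is what stops the crawler from looping forever when pages link back to each other, and `max_pages` is a simple stand-in for the limited time a crawler spends on any one site.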

Because of this, search engines crawl websites routinely. Websites that regularly publish new content tend to be crawled more often, and pages that are updated frequently also tend to be revisited by search engine bots more often.

Crawling: Can search engines find your pages?

Not every page on your site is discovered automatically. Some pages remain hidden due to technical or structural issues.

This usually happens when:

- Pages are not internally linked

- Access is blocked by site settings

- Site navigation is confusing
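The first problem in the list above, pages that are not internally linked (often called orphan pages), can be spotted by comparing the pages you want indexed against the links your pages actually contain. This is a minimal sketch that assumes you already have both lists, for example from your sitemap and a site crawl. The sample URLs and the `find_orphan_pages` helper are hypothetical.

```python
# Hypothetical data: pages you expect to be indexed (e.g. from your sitemap)
# and the internal links found on each page (e.g. from a site crawl).
SITEMAP_URLS = {"/", "/services", "/blog", "/blog/old-post", "/contact"}
INTERNAL_LINKS = {
    "/": ["/services", "/blog", "/contact"],
    "/services": ["/contact"],
    "/blog": ["/"],
    "/contact": ["/"],
}


def find_orphan_pages(expected_urls, link_graph, home="/"):
    """Return expected pages that no other page links to."""
    linked = set()
    for source, targets in link_graph.items():
        for target in targets:
            if target != source:  # a page linking to itself does not help discovery
                linked.add(target)
    # The homepage is usually reached directly, so it is not treated as an orphan.
    return sorted(url for url in expected_urls if url not in linked and url != home)


print(find_orphan_pages(SITEMAP_URLS, INTERNAL_LINKS))
```

Any page this reports needs at least one link from a related page so bots can reach it by following links.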

To avoid these problems, make sure your website is well-structured. This allows search engines to fully crawl your website and ensures that no important page goes unnoticed.

Tell search engines how to crawl your site

Website owners can guide crawlers using simple tools.

For example, a robots.txt file tells bots which sections of your site they are allowed to crawl. In contrast, an XML sitemap provides a clear list of important pages.

When used properly, these tools make it easier for search engines to crawl your website. They also help focus on the most valuable content.
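The two tools work well together. This sketch uses Python's standard `urllib.robotparser` to read robots.txt rules, then builds an XML sitemap that lists only the pages those rules allow. The robots.txt content, page list, dates, and the `build_sitemap` helper are all hypothetical. Python's parser also applies rules in file order rather than using the longest match some search engines use, so treat it as an approximation.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for example.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

Sitemap: https://example.com/sitemap.xml
"""

# Hypothetical pages with their last-modified dates.
PAGES = [
    ("https://example.com/", "2024-05-01"),
    ("https://example.com/blog/post-1", "2024-04-18"),
    ("https://example.com/admin/login", "2024-01-10"),
]


def load_robots(text):
    """Parse robots.txt text without fetching it over the network."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


def build_sitemap(pages, robots, user_agent="*"):
    """Build a sitemap that lists only pages crawlers are allowed to fetch."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for loc, lastmod in pages:
        if not robots.can_fetch(user_agent, loc):
            continue  # don't advertise URLs that robots.txt blocks
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


robots = load_robots(ROBOTS_TXT)
print(robots.can_fetch("*", "https://example.com/admin/login"))  # False
print(build_sitemap(PAGES, robots))
```

Keeping blocked URLs out of the sitemap avoids sending search engines mixed signals about which pages matter.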

Common Crawling Problems (and Quick Fixes)

Even well-designed websites can face crawling issues. The good news is that most of these problems are simple to fix.

Common issues include:

- Broken or dead links

- Slow-loading pages

- Redirect chains or loops

To fix them:

- Perform regular site audits

- Improve page speed

- Simplify redirect structures
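Redirect chains and loops are easy to detect once you have a list of your redirects. This is a minimal sketch that assumes the redirects are available as a simple source-to-target mapping, such as one exported from your server configuration. The sample paths and the `follow_redirects` and `flatten` helpers are hypothetical. Flattening points each old URL straight at its final destination, which is one way to simplify redirect structures.

```python
# Hypothetical redirect map: source path -> target path.
REDIRECTS = {
    "/old-page": "/newer-page",
    "/newer-page": "/final-page",
    "/loop-a": "/loop-b",
    "/loop-b": "/loop-a",
}


def follow_redirects(url, redirects, max_hops=10):
    """Return (path, status). path lists every URL visited; status is
    'ok', 'chain', 'loop', or 'too_many_hops'."""
    path = [url]
    seen = {url}
    while path[-1] in redirects:
        if len(path) - 1 >= max_hops:
            return path, "too_many_hops"
        nxt = redirects[path[-1]]
        path.append(nxt)
        if nxt in seen:
            return path, "loop"
        seen.add(nxt)
    # One hop is a normal redirect; two or more hops form a chain.
    return path, ("chain" if len(path) > 2 else "ok")


def flatten(redirects):
    """Point every redirect directly at its final destination.
    Loops are skipped because they need manual review."""
    fixed = {}
    for source in redirects:
        path, status = follow_redirects(source, redirects)
        if status in ("ok", "chain"):
            fixed[source] = path[-1]
    return fixed


print(follow_redirects("/old-page", REDIRECTS))
print(flatten(REDIRECTS))
```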

These steps ensure that search engines crawl websites smoothly without interruptions.

What is indexing?

After crawling, the next step is indexing. This is the stage where search engines save and arrange the data they gather.

After search engines crawl websites, they examine the content on each page. Then, they store this information in their database for future searches. This allows them to quickly retrieve relevant pages when users perform a search.
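One common way to make stored pages quick to retrieve is an inverted index, which maps each word to the pages that contain it. Real search indexes store far more, such as word positions, links, and metadata. Treat this as a minimal sketch only. The sample pages and helper names are hypothetical.

```python
import re
from collections import defaultdict

# Hypothetical pages that have already been crawled.
PAGES = {
    "https://example.com/crawling": "How search engines crawl websites and follow links",
    "https://example.com/indexing": "How search engines store pages in an index",
    "https://example.com/pricing": "Pricing plans for our SEO services",
}


def tokenize(text):
    """Lowercase the text and split it into words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map each word to the set of URLs that contain it (an inverted index)."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


index = build_index(PAGES)
print(search(index, "search engines"))
```

Because the index is built ahead of time, answering a query only requires looking up a few words rather than rereading every page.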

If your page isn’t indexed, it won’t be visible in search listings. Thus, proper indexing is critical for visibility.

How search engines store your page details

Search engines collect several types of information from each page, such as:

- Titles and headings

- Written content

- Images and media

- Internal and external links

This data helps search engines understand the purpose and relevance of your page.
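Several of the items listed above can be pulled from a page's HTML with Python's standard `html.parser` module. This is a minimal sketch. The `PageDetailsParser` class and sample HTML are hypothetical, and the parser does not filter out script or style contents or handle nested tags inside headings the way a production parser would.

```python
from html.parser import HTMLParser


class PageDetailsParser(HTMLParser):
    """Collect the title, headings, body text, image alt text, and links from HTML."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = []   # (tag, text) pairs for h1-h3
        self.text = []       # ordinary written content
        self.images = []     # alt text of each <img>
        self.links = []      # href of each <a>
        self._current = None  # title/heading tag whose text is being captured

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1", "h2", "h3"):
            self._current = tag
        elif tag == "img":
            self.images.append(attrs.get("alt") or "")
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        if self._current == "title":
            self.title += text
        elif self._current is not None:
            self.headings.append((self._current, text))
        else:
            self.text.append(text)


SAMPLE_HTML = """
<html><head><title>How Search Engines Crawl Websites</title></head>
<body>
  <h1>How Crawling Works</h1>
  <p>Crawlers follow links from page to page.</p>
  <img src="crawler.png" alt="Diagram of a web crawler">
  <a href="/indexing">Read about indexing</a>
  <a href="https://example.org/">External source</a>
</body></html>
"""

parser = PageDetailsParser()
parser.feed(SAMPLE_HTML)
print(parser.title, parser.headings, parser.images, parser.links)
```

Empty alt text in the image list is a quick signal that search engines have little to go on for that image.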

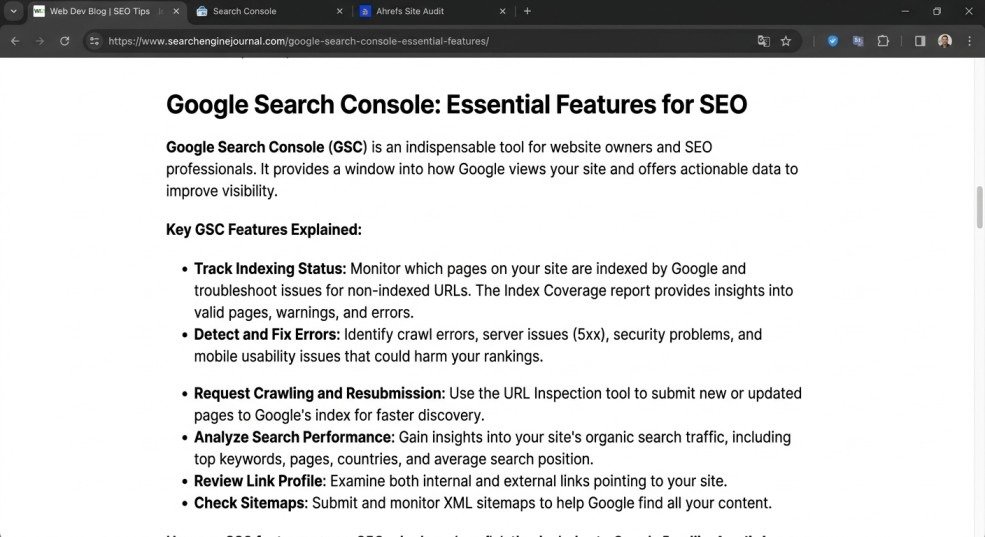

Google Search Console: A webmaster’s best friend

Google Search Console is a powerful tool for managing and monitoring your website.

It provides insights into how your site performs in search results.

With this tool, you can:

- Track indexing status

- Detect errors and issues

- Request crawling for new pages

Using it regularly helps ensure your website is properly processed by search engines.

Which Pages Should You Keep Hidden from Search Engines?

Not all pages are meant to appear in search results. Some pages may not provide value to users.

Examples include:

- Login or admin pages

- Duplicate versions of content

- Private or restricted sections

Keeping these pages out of search results, for example with a noindex meta tag, allows search engines to concentrate on your most valuable content. It also improves the overall SEO performance of your website.

What is search ranking?

Ranking is the final stage of the process. It determines the order in which pages appear in search results.

Search engines use many factors to rank content, including relevance, quality, and authority. Pages that better match user intent are placed higher.
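Relevance is often illustrated with TF-IDF, a classic information-retrieval weighting. It rewards pages where the query terms appear often, but only when those terms are rare across the whole collection. Modern search engines combine many more signals, so this is a toy sketch of the idea and not how any search engine actually ranks pages. The sample documents and function names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical documents competing for the same query.
DOCS = {
    "guide": "how search engines crawl websites crawl budget and crawl errors",
    "news": "search engines announce new features",
    "recipe": "how to bake bread at home",
}


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def tf_idf_scores(query, docs):
    """Score each document by the summed TF-IDF weight of the query terms."""
    tokenized = {name: tokenize(text) for name, text in docs.items()}
    n_docs = len(docs)
    scores = {}
    for name, words in tokenized.items():
        if not words:
            scores[name] = 0.0
            continue
        counts = Counter(words)
        score = 0.0
        for term in tokenize(query):
            df = sum(1 for other in tokenized.values() if term in other)
            if df == 0:
                continue  # term appears nowhere, so it cannot help any page
            idf = math.log(n_docs / df)      # rarer terms carry more weight
            tf = counts[term] / len(words)   # share of this page's words
            score += tf * idf
        scores[name] = score
    return scores


scores = tf_idf_scores("crawl websites", DOCS)
print(sorted(scores, key=scores.get, reverse=True))
```

A term that appears in every document gets an IDF of zero, which is why common words contribute little to relevance.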

How does Google decide which pages to show?

Google uses complex algorithms to select the best results. These systems analyze different signals to determine which pages deserve higher positions.

Key factors include:

- Content relevance

- Website authority

- User experience

If your page meets these criteria, it has a better chance of ranking well.
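Website authority is often explained through PageRank, the link-analysis algorithm Google published in its early days. The core idea is that a page is important if important pages link to it. Google's current systems use many more signals, so this is only a toy sketch of that original idea. The link graph is hypothetical, and the damping factor of 0.85 is the value commonly cited from the original paper.

```python
# Hypothetical link graph: each page maps to the pages it links to.
LINKS = {
    "home": ["blog", "services"],
    "blog": ["home", "services"],
    "services": ["home"],
    "about": ["home"],  # nothing links to this page
}


def pagerank(links, damping=0.85, iterations=50):
    """Classic PageRank computed by power iteration."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank across all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


for page, score in sorted(pagerank(LINKS).items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

The "about" page keeps only the baseline score because no page links to it. In this model, internal and external links are what pass authority along.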

Content Quality and Relevance

Content plays a central role in SEO success. High-quality content attracts users and builds trust.

To improve your content:

- Write in a clear and engaging way

- Provide accurate and helpful information

- Focus on solving user problems

Strong content increases both engagement and rankings.

Make your site interesting and useful

User experience is just as important as content. A well-designed site keeps visitors engaged.

To improve usability:

- Use a clean and simple layout

- Add helpful visuals

- Ensure easy navigation

A better experience leads to longer visits and improved SEO performance.

Be Strategic with Internal Linking

Internal links connect your pages and create a strong structure.

They help users explore your site and allow bots to navigate it effectively. As a result, search engines can crawl websites more efficiently and better understand the relationships between pages.
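One way to check whether your internal links give bots an easy path is to measure click depth, the minimum number of links needed to reach each page from the homepage. This is a minimal sketch that assumes you already have your internal links as a mapping. The sample paths are hypothetical, and the three-click threshold is a common rule of thumb rather than a documented search engine limit.

```python
from collections import deque

# Hypothetical internal link graph for a small site.
SITE_LINKS = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/old-guide"],
    "/services": ["/contact"],
    "/contact": [],
    "/blog/old-guide": [],
}


def click_depth(links, home="/"):
    """Return the minimum number of clicks needed to reach each page from the homepage."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # breadth-first order guarantees the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def deep_pages(links, max_depth=3, home="/"):
    """List pages buried deeper than max_depth clicks (a rule-of-thumb threshold)."""
    return sorted(page for page, d in click_depth(links, home).items() if d > max_depth)


print(deep_pages(SITE_LINKS))
```

A page flagged here is a good candidate for a link from a higher-level page, such as a category page or the homepage.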

How long until I see impact in search results?

SEO is a long-term strategy. Results do not appear instantly.

You might notice some improvements within a few weeks. However, significant growth usually takes several months. Consistency and patience are key.

Use the Query Arg Monitor to Eliminate Unnecessary Query Args

Extra URL parameters can confuse search engines and create duplicate content.

Removing unnecessary parameters helps keep your site clean and easier to process.
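The heading refers to a specific tool, and the sketch below does not reproduce it. It only illustrates the underlying idea using Python's standard `urllib.parse`. The approach normalizes URLs by keeping an allowlist of parameters that actually change the page and dropping everything else, such as tracking tags. The allowlist and the `clean_url` function are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical allowlist: the only query parameters that change page content on this site.
ALLOWED_PARAMS = {"page", "category"}


def clean_url(url, allowed=ALLOWED_PARAMS):
    """Drop unneeded query parameters and sort the rest so equivalent URLs match.
    The fragment (#...) is also dropped, since crawlers ignore it."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key in allowed
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(clean_url("https://example.com/shoes?utm_source=newsletter&page=2&sessionid=abc"))
```

Sorting the remaining parameters means "?page=2&category=boots" and "?category=boots&page=2" collapse to a single URL instead of looking like duplicates.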

Manage Internal Site Search URLs

Internal search pages can generate many similar URLs. This can lead to duplication issues.

Managing these URLs properly helps maintain a strong SEO structure.
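Before you can manage internal search URLs, you first need to identify them. This is a minimal sketch that assumes your site's search results use a /search path or an "s" or "q" query parameter (WordPress, for example, uses ?s=). Adjust these hypothetical rules to match your own URLs. Once identified, these URLs can be left out of your sitemap and kept out of the index.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical conventions for this site's internal search result URLs.
SEARCH_PATH = "/search"
SEARCH_PARAMS = {"s", "q"}


def is_internal_search_url(url):
    """True if the URL looks like an internal search results page."""
    parts = urlsplit(url)
    # Match /search and /search/... but not unrelated paths like /searchlight-review.
    if parts.path == SEARCH_PATH or parts.path.startswith(SEARCH_PATH + "/"):
        return True
    params = parse_qs(parts.query, keep_blank_values=True)
    return any(name in SEARCH_PARAMS for name in params)


def split_urls(urls):
    """Separate internal search URLs from regular pages."""
    regular, search = [], []
    for url in urls:
        (search if is_internal_search_url(url) else regular).append(url)
    return regular, search


regular, search = split_urls([
    "https://example.com/blog",
    "https://example.com/?s=shoes",
    "https://example.com/search/red+shoes",
])
print(regular, search)
```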

Types of Search Engines Around the World Besides Google

While Google dominates the market, other search engines also exist, including:

- Bing

- Yahoo

- DuckDuckGo

Each platform has its own system, but the core principles remain similar.

How do Search Engines Make Money?

Search engines mainly earn revenue through advertising.

Businesses pay to display ads in search results. However, organic rankings are determined by SEO factors, not by paid promotion.

Ready to Optimize SEO? Collaborate with Dream box

Now that you understand the process, it is time to take action.

Improving your website step by step can lead to better results. If needed, collaborating with experts can help you progress faster and achieve stronger results.

Conclusion

Understanding how search engines crawl websites forms the foundation of effective SEO. Each step, from discovering pages to ranking them, is important.

Improving your site structure helps search engines understand your website better. This makes it easier for them to navigate all your pages efficiently. Enhancing your content quality helps provide value to users. Optimizing technical elements further boosts your chances of appearing in search results. Focus on delivering real value to your users. Also, maintain consistency across your entire website.

With time, these efforts will help your site grow steadily. You will attract more visitors and gradually achieve higher search rankings. Maintaining a strong SEO strategy ensures long-term success for your website.

FAQs

- How does JavaScript help search engines crawl websites?

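JavaScript enables dynamic content to appear on a page. Search engines such as Google can render JavaScript to see this content, including interactive elements and updated information. However, rendering can happen after the initial crawl, so important content and links should ideally also be present in the page's HTML.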

- How do popular search engines crawl and index websites?

Search engines send crawlers to visit web pages. These crawlers follow links and store content in the search index.

- How do different search engines rank pages when crawling websites?

They rank pages based on factors such as page authority, relevance, freshness, and internal and external linking structure.

- How do search engines find new web pages?

New pages are found through links from other websites and from your own pages. They are also located through sitemaps and social sharing signals.

- Which companies provide the best tools for monitoring website crawling?

Google (through Search Console), SEMrush, Ahrefs, and Screaming Frog provide excellent tools for monitoring websites. These tools help you track crawling and indexing effectively.

Meta description

Learn how search engines crawl websites and index content to boost your rankings. Optimize your site structure, content, and technical setup for better visibility. Start improving your SEO today.

- How do search engines work?

- What is Crawling in SEO? Now That You Know, Optimize for It

- How web crawling works for search engines

- Crawling: Can search engines find your pages?

- What is indexing?

- How search engines store your page details

- Google Search Console: A webmaster’s best friend

- Which Pages Should You Keep Hidden from Search Engines?

- What is search ranking?

- How does Google decide which pages to show?

- Content Quality and Relevance

- Make your site interesting and useful

- Be Strategic with Internal Linking

- How long until I see impact in search results?

- Use the Query Arg Monitor to Eliminate Unnecessary Query Args

- Manage Internal Site Search URLs

- Types of Search Engines Around the World Besides Google

- How do Search Engines Make Money?

- Ready to Optimize SEO? Collaborate with Dream box

- Conclusion

- FAQs

- Meta description